AKS Just Went GA: What Enterprise Teams Need to Know Before Going All-In


Microsoft has just announced general availability of Azure Kubernetes Service (AKS). AKS replaces the older ACS (Azure Container Service) as the managed Kubernetes offering on Azure. The control plane is fully managed by Microsoft, and it is free: you pay only for the worker node VMs, storage, and networking your workloads consume.

That pricing model is compelling. No cluster management fee, no hidden control plane costs. Microsoft keeps the API server, etcd, scheduler, and controller manager running. But “managed” doesn’t mean “simple.” We’ve been running AKS in preview for enterprise clients, and the gap between the quickstart tutorial and a production-grade deployment is substantial. This post covers the decisions that matter.

Networking: The First Decision That Shapes Everything

AKS offers two networking models, and the choice affects IP address planning, network policy support, and how your pods communicate with the rest of your Azure environment.

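Kubenet is the default. Pods get IP addresses from a separate pod address range (the pod CIDR) that exists only inside the cluster. It conserves IP addresses because only the nodes need VNet IPs. Azure route tables carry pod-to-pod traffic between nodes, and traffic leaving the cluster is NATed to the node's IP.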
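A kubenet cluster uses the same `az aks create` command with a different network plugin. The following is a minimal sketch, assuming illustrative CIDR values. Choose ranges that do not overlap your VNet, peered VNets, or on-prem address space.

```shell
# Create an AKS cluster with kubenet networking.
# The CIDRs below are illustrative assumptions, not recommendations:
#   --pod-cidr      range pods draw their IPs from (never taken from the VNet)
#   --service-cidr  range for Kubernetes Service cluster IPs
#   --dns-service-ip must fall inside the service CIDR
az aks create \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --network-plugin kubenet \
  --pod-cidr 10.244.0.0/16 \
  --service-cidr 10.2.0.0/24 \
  --dns-service-ip 10.2.0.10
```

Only the nodes consume addresses from the VNet subnet here. Every pod address comes from `--pod-cidr`.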

Azure CNI assigns every pod a real IP address from your Azure VNet subnet. Pods become first-class network citizens - reachable directly from other VNet resources, on-prem networks via ExpressRoute, and peered VNets without NAT or routing tricks.

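```shell
# Create an AKS cluster with Azure CNI.
# SUBNET_ID holds the full resource ID of an existing VNet subnet
# (replace the <...> placeholders). The service CIDR must not overlap
# the VNet, and the DNS service IP must fall inside the service CIDR.
SUBNET_ID="/subscriptions/<sub>/resourceGroups/<rg>/providers/Microsoft.Network/virtualNetworks/<vnet>/subnets/<subnet>"

az aks create \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --network-plugin azure \
  --vnet-subnet-id "$SUBNET_ID" \
  --docker-bridge-address 172.17.0.1/16 \
  --dns-service-ip 10.2.0.10 \
  --service-cidr 10.2.0.0/24
```

Azure CNI sounds better on paper. In practice, it creates an IP address planning nightmare. A /24 subnet gives you 251 usable addresses, because Azure reserves five addresses in every subnet. With the default of 30 pods per node, a single node consumes 31 IPs: one for the node itself and one for each potential pod, reserved up front. That /24 only supports about 8 nodes (251 / 31, rounded down). Plan for CNI by over-sizing subnets aggressively. A /21 gives you roughly 2,000 IPs, enough for about 65 nodes at 30 pods each. Getting this wrong early means re-deploying the cluster, because you can't resize the subnet of a running AKS cluster.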
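The subnet arithmetic above is easy to get wrong under deadline pressure, so it can help to script it. The following is a rough Bash sketch. It assumes Azure's five reserved addresses per subnet and one IP per node plus one per pod. The function names `cni_max_nodes` and `cni_min_prefix` are hypothetical helpers, not part of the Azure CLI.

```shell
#!/usr/bin/env bash
# Rough Azure CNI capacity planning (hypothetical helpers).
# Assumes Azure reserves 5 addresses per subnet and each node
# pre-allocates one IP for itself plus one per pod (max_pods).

# How many nodes fit in a subnet of the given prefix length?
cni_max_nodes() {
  local prefix=$1     # subnet prefix length, e.g. 24 for a /24
  local max_pods=$2   # max pods per node (30 is the CLI default for CNI)
  local usable=$(( (1 << (32 - prefix)) - 5 ))
  local per_node=$(( max_pods + 1 ))
  echo $(( usable / per_node ))
}

# Smallest subnet (largest prefix length) that fits the given node count.
cni_min_prefix() {
  local nodes=$1
  local max_pods=$2
  local prefix
  for (( prefix = 29; prefix >= 8; prefix-- )); do
    if (( $(cni_max_nodes "$prefix" "$max_pods") >= nodes )); then
      echo "$prefix"
      return 0
    fi
  done
  return 1
}

cni_max_nodes 24 30    # prints 8
cni_max_nodes 21 30    # prints 65
cni_min_prefix 11 30   # prints 23 (10 nodes plus 1 upgrade surge node)
```

Passing your target node count plus one to `cni_min_prefix` leaves room for the extra node AKS adds temporarily during an upgrade. Add further headroom for whatever maximum you give the cluster autoscaler.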

Use kubenet when you don’t need direct pod-to-VNet connectivity. Use Azure CNI when pods need to be reachable from outside the cluster, when you need Azure Network Policies, or when regulatory requirements demand routable IPs.

Azure docs: Kubenet networking · Azure CNI networking

RBAC and Azure AD Integration

Kubernetes has its own RBAC system, and by default AKS uses client certificate authentication. That works for a small team but falls apart in an enterprise where you need centralised identity management, conditional access policies, and audit trails tied to corporate identities.

AKS supports authentication through Azure AD (since renamed Microsoft Entra ID). Users authenticate with corporate credentials and get mapped to Kubernetes RBAC through group memberships.
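Enabling the integration can be sketched with the AKS-managed Azure AD flags available in current Azure CLI releases. The group object ID below is a placeholder.

```shell
# Enable AKS-managed Azure AD integration at creation time.
# AAD_ADMIN_GROUP_ID is a placeholder for the object ID of the Azure AD
# group whose members should receive cluster-admin access.
AAD_ADMIN_GROUP_ID="<azure-ad-group-object-id>"

az aks create \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --enable-aad \
  --aad-admin-group-object-ids "$AAD_ADMIN_GROUP_ID"

# Fetch a kubeconfig. kubectl then authenticates each user against
# Azure AD (recent AKS versions use the kubelogin credential plugin)
# instead of relying on a shared client certificate.
az aks get-credentials --resource-group myResourceGroup --name myAKSCluster
```

Groups passed to `--aad-admin-group-object-ids` receive cluster-admin automatically. Explicit bindings are for any additional groups you want to map to Kubernetes roles.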

```yaml
# ClusterRoleBinding mapping an Azure AD group to cluster-admin
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: aks-cluster-admins
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: Group
    # Azure AD groups are referenced by object ID, not display name
    name: "<azure-ad-group-object-id>"
    apiGroup: rbac.authorization.k8s.io
```

This integration isn't optional for enterprise deployments. Without it, you end up managing service accounts manually and sharing kubeconfig files. Enable Azure AD integration from day one - retrofitting it onto an existing cluster is painful.
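Not every group should be cluster-admin. The following sketch assumes a hypothetical team group and namespace. It binds the built-in `edit` ClusterRole within a single namespace, so the group's permissions stop at that namespace boundary.

```shell
# Grant a hypothetical "team-a" Azure AD group edit rights in one
# namespace only, instead of cluster-wide admin. Both values are placeholders.
TEAM_GROUP_ID="<team-a-group-object-id>"
NAMESPACE="team-a"

kubectl create namespace "$NAMESPACE"

# A RoleBinding (not a ClusterRoleBinding) scopes the built-in "edit"
# ClusterRole to this namespace.
kubectl create rolebinding team-a-edit \
  --clusterrole=edit \
  --group="$TEAM_GROUP_ID" \
  --namespace="$NAMESPACE"
```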

Azure docs: AKS-managed Azure AD integration · Kubernetes RBAC with Azure AD

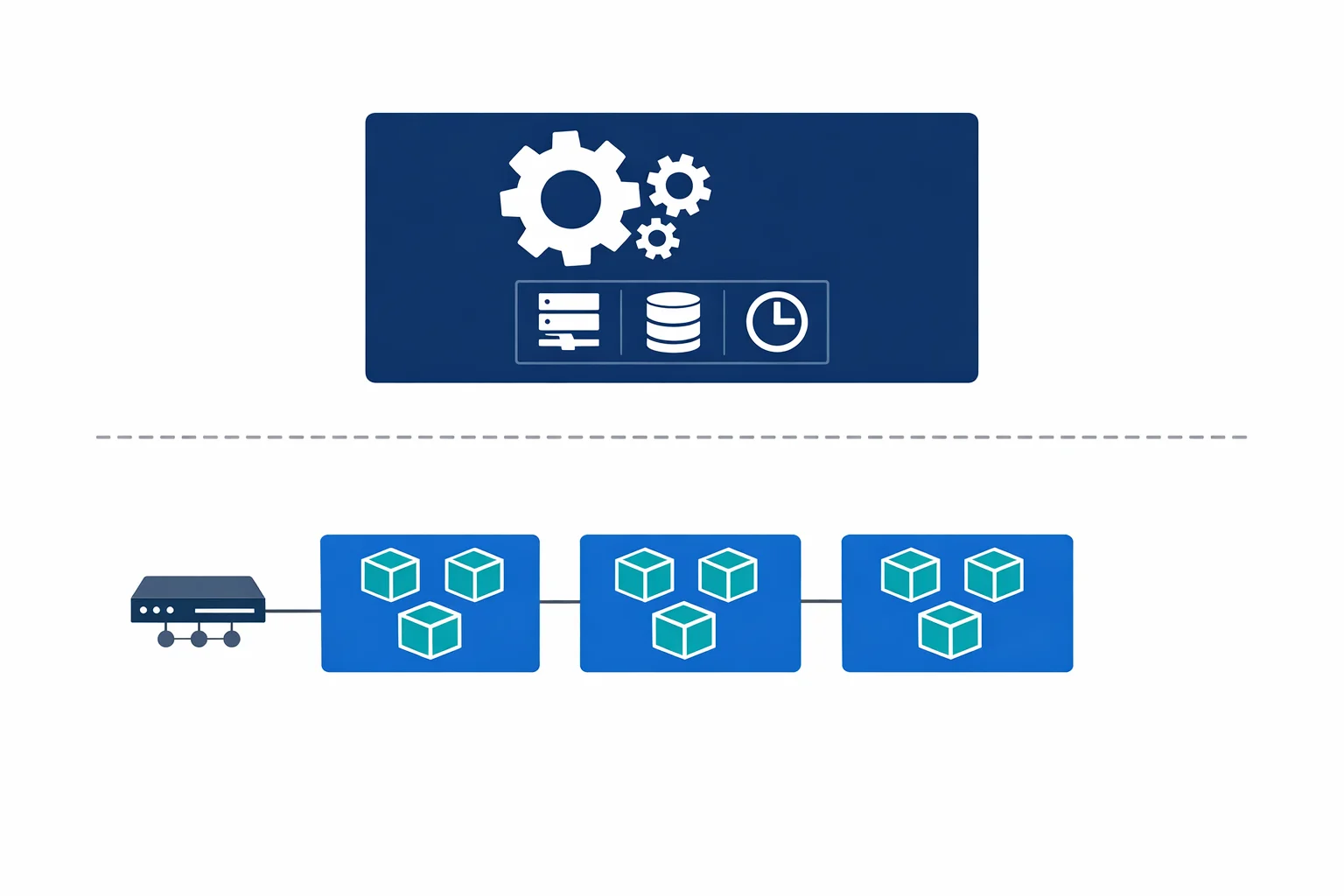

Node Sizing and Scaling

AKS launches at GA with a single node pool per cluster. Pick your VM size carefully: changing it later means creating a new cluster (multi-pool support is on the roadmap).

For production, use at least three nodes for redundancy across availability zones. Size the VMs based on your workload profile:

```shell
# Create a cluster with 3 nodes sized for general workloads,
# and let the cluster autoscaler grow it to at most 10 nodes
az aks create \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --node-count 3 \
  --node-vm-size Standard_D4s_v3 \
  --enable-cluster-autoscaler \
  --min-count 3 \
  --max-count 10
```

The cluster autoscaler adds nodes when pods can't be scheduled because their resource requests don't fit on any existing node. It removes nodes that stay underutilised. Set a minimum that covers your baseline traffic and a maximum that caps your compute spend. Without autoscaling, you're either over-provisioned (wasting money) or under-provisioned (dropping requests during peaks).
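One way to put numbers on the minimum and maximum is to divide total CPU requests by per-node allocatable CPU. This is a rough sketch only. The autoscaler itself reacts to pods that fail to schedule rather than computing this. The function name `nodes_for_requests` and all figures are hypothetical.

```shell
# Back-of-envelope node count for a given total of CPU requests.
# All figures are hypothetical. Use the "Allocatable" CPU reported by
# `kubectl describe node` for your VM size, which is lower than the raw
# vCPU count because of system reservations.
nodes_for_requests() {
  local total_request_m=$1   # sum of CPU requests across pods, in millicores
  local allocatable_m=$2     # allocatable CPU per node, in millicores
  local floor=$3             # minimum node count (e.g. 3 for redundancy)
  # Ceiling division: a partially filled node still has to exist
  local needed=$(( (total_request_m + allocatable_m - 1) / allocatable_m ))
  if (( needed < floor )); then
    needed=$floor
  fi
  echo "$needed"
}

nodes_for_requests 6000 3800 3    # baseline: prints 3 (2 needed, raised to the floor)
nodes_for_requests 30000 3800 3   # peak: prints 8
```

Run it with baseline requests to choose `--min-count` and with peak requests to choose `--max-count`. Repeat the exercise for memory and take whichever dimension needs more nodes.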

Use node selectors and tolerations in your pod specs, together with labels and taints on the nodes, to control where workloads land. Even with a single node pool, you can label nodes and schedule critical services away from batch workloads.
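A minimal sketch of that pattern follows. It assumes a hypothetical node name and a `workload=batch` label and taint chosen for illustration. The taint repels pods that lack a matching toleration, and the nodeSelector steers the batch pod onto the labelled node.

```shell
# Hypothetical node name; list the real ones with: kubectl get nodes
NODE_NAME="aks-nodepool1-00000000-0"

# Label the node so batch pods can target it with a nodeSelector
kubectl label nodes "$NODE_NAME" workload=batch

# Taint it so pods without a matching toleration are not scheduled there
kubectl taint nodes "$NODE_NAME" workload=batch:NoSchedule

# A batch pod that both selects and tolerates the dedicated node
kubectl apply -f - <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: nightly-report
spec:
  restartPolicy: Never
  nodeSelector:
    workload: batch
  tolerations:
    - key: "workload"
      operator: "Equal"
      value: "batch"
      effect: "NoSchedule"
  containers:
    - name: report
      image: busybox:1.36
      command: ["sh", "-c", "echo generating report; sleep 30"]
EOF
```

Labels and taints applied with `kubectl` live on individual node objects. Nodes added later by scaling or upgrades won't carry them automatically, so re-apply them after such events.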

Azure docs: Scale an AKS cluster · Cluster autoscaler

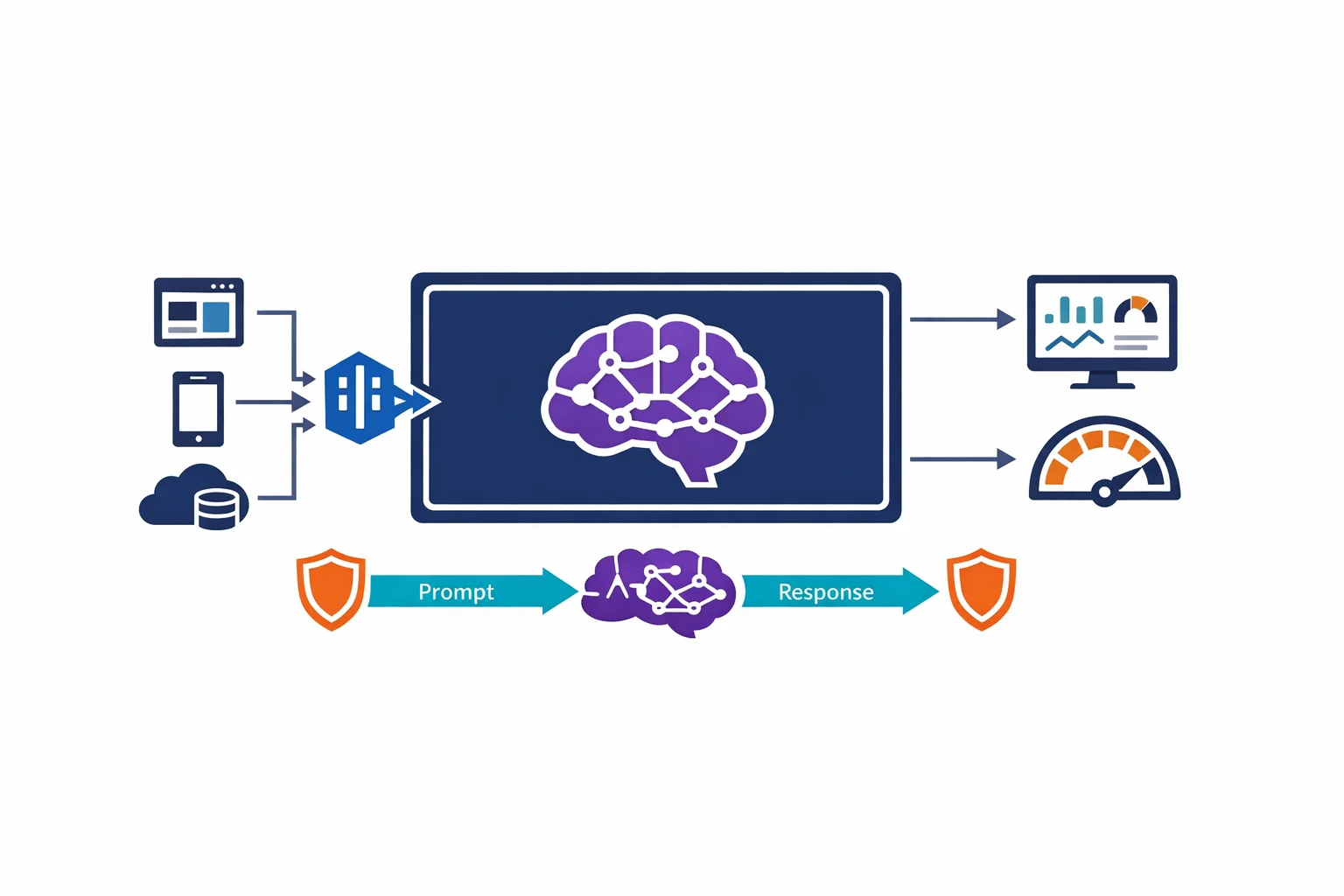

Monitoring: You Can’t Operate What You Can’t See

Azure Monitor for containers integrates directly with AKS to collect metrics, logs, and inventory data from your cluster. Enable it during cluster creation - not three months later when something breaks at 2 AM.
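Enabling the monitoring add-on takes a single flag at creation time, or one command for an existing cluster. The following sketch assumes the resource names used elsewhere in this post and a placeholder workspace ID.

```shell
# Enable Azure Monitor for containers when creating the cluster.
# Without --workspace-resource-id, the CLI creates or reuses a default
# Log Analytics workspace.
az aks create \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --enable-addons monitoring

# Alternatively, for a cluster that already exists, attach it to a specific
# workspace. WORKSPACE_ID is a placeholder for the workspace's resource ID.
WORKSPACE_ID="/subscriptions/<sub>/resourceGroups/<rg>/providers/Microsoft.OperationalInsights/workspaces/<workspace>"
az aks enable-addons \
  --resource-group myResourceGroup \
  --name myAKSCluster \
  --addons monitoring \
  --workspace-resource-id "$WORKSPACE_ID"
```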

The stack gives you node metrics, pod metrics, container logs (stdout/stderr), and Kubernetes event logs - all queryable via KQL in Log Analytics. But monitoring alone won’t save you. Resource requests and limits on every container are non-negotiable in production.

```yaml
# Always set resource requests and limits
# (this block goes under each container in a Pod or Deployment spec)
resources:
  requests:
    cpu: "250m"
    memory: "256Mi"
  limits:
    cpu: "500m"
    memory: "512Mi"
```

Without requests, the scheduler can't make intelligent placement decisions. Without limits, a single misbehaving container can consume all resources on a node and take down every other pod running there. We've seen this happen - a memory leak in one service caused cascading failures across an entire node pool because nobody set memory limits.

Set requests based on steady-state usage. Set limits based on peak usage. Monitor actual consumption for two weeks, then adjust.
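Both halves of that advice can be scripted. The following sketch assumes a hypothetical `team-a` namespace. It uses `kubectl top` to watch actual consumption and a LimitRange to apply default requests and limits to any container that omits them. The defaults simply mirror the example values above and are not a recommendation.

```shell
# Watch real per-container consumption to calibrate requests and limits.
# Requires metrics-server, which current AKS versions deploy by default.
kubectl top pods --namespace team-a --containers

# Safety net: give any container in this namespace that omits
# requests/limits a default, so nothing lands on a node unbounded.
kubectl apply -f - <<'EOF'
apiVersion: v1
kind: LimitRange
metadata:
  name: default-container-resources
  namespace: team-a
spec:
  limits:
    - type: Container
      defaultRequest:   # used as requests when a container sets none
        cpu: 250m
        memory: 256Mi
      default:          # used as limits when a container sets none
        cpu: 500m
        memory: 512Mi
EOF
```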

Azure docs: Azure Monitor for containers · Resource management best practices

Ingress: NGINX vs Application Gateway

Getting traffic into your cluster requires an ingress controller. AKS doesn’t ship with one by default - you choose and deploy it yourself.

NGINX Ingress Controller is the community standard. It is battle-tested across Kubernetes distributions and exposes a rich set of annotations for rewrites, TLS redirects, rate limiting, and similar settings. Most teams should start with NGINX.

Application Gateway Ingress Controller (AGIC) uses Azure Application Gateway as the ingress. You get Azure-native WAF, SSL termination, and integration with Azure networking. The downside is added complexity - the Application Gateway lives outside the cluster, configuration sync can be slow, and debugging requires understanding both Kubernetes ingress and Application Gateway rules.

```shell
# Install NGINX Ingress Controller via Helm
helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
helm repo update
helm install ingress-nginx ingress-nginx/ingress-nginx \
  --namespace ingress-nginx \
  --create-namespace \
  --set controller.service.annotations."service\.beta\.kubernetes\.io/azure-load-balancer-health-probe-request-path"=/healthz
```

Use NGINX if you want a straightforward, well-documented ingress solution. Use AGIC if you need WAF capabilities or your organisation already standardises on Application Gateway.
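Once the controller is running, workloads opt in with an Ingress resource. The following minimal sketch assumes a hypothetical `orders-api` Service listening on port 80 in an `orders` namespace, plus a placeholder hostname.

```shell
# Route traffic for a placeholder host to a hypothetical Service through
# the NGINX controller. "nginx" is the IngressClass name the ingress-nginx
# Helm chart creates by default.
kubectl apply -f - <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: orders-api
  namespace: orders
spec:
  ingressClassName: nginx
  rules:
    - host: orders.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: orders-api
                port:
                  number: 80
EOF
```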

Azure docs: NGINX Ingress Controller on AKS · Application Gateway Ingress Controller

When AKS Is Overkill

This is the section most AKS blog posts skip. Before choosing AKS, answer these questions honestly:

- Do you have more than five services that need independent scaling and deployment?

- Do you need custom scheduling, operators, or CRDs?

- Do you have at least one engineer dedicated to platform operations?

- Are you deploying a single API that would run fine on App Service?

Most teams we talk to don’t need Kubernetes. A .NET API with a SQL database doesn’t need pod autoscaling, service discovery, and rolling deployments across node pools. App Service handles that with a fraction of the complexity. If your team spends more time managing the cluster than building features, you chose the wrong platform.

The Operational Reality

AKS is managed, but “managed” covers the control plane only. Kubernetes version upgrades, node OS patching, certificate rotation, and network policy maintenance are all on you. None of this is insurmountable. All of it requires dedicated time from someone on your team. Factor this operational cost into your platform decision before you commit.
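Most of those chores map to an Azure CLI command in current releases. The following minimal sketch assumes the resource names used earlier in this post and a placeholder target version. Several of these commands postdate the GA release, so check `az aks --help` for your CLI version.

```shell
# List the Kubernetes versions this cluster can upgrade to
az aks get-upgrades --resource-group myResourceGroup --name myAKSCluster --output table

# Upgrade the control plane and nodes. K8S_VERSION is a placeholder for
# one of the versions listed above.
K8S_VERSION="<target-version>"
az aks upgrade --resource-group myResourceGroup --name myAKSCluster \
  --kubernetes-version "$K8S_VERSION"

# Move nodes onto the latest node OS image without changing Kubernetes version
az aks upgrade --resource-group myResourceGroup --name myAKSCluster --node-image-only

# Rotate cluster certificates; schedule this in a maintenance window,
# as it can disrupt the cluster while components pick up new certificates
az aks rotate-certs --resource-group myResourceGroup --name myAKSCluster
```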

The Takeaway

AKS going GA is a milestone for Azure's container story. The free managed control plane, Azure AD integration, and choice of networking models make it a legitimate enterprise Kubernetes platform. But the decision to adopt it should be driven by your workload requirements and your team's operational capacity, not by industry hype.

Get the networking model right on day one. Enable Azure AD integration before your first production deployment. Set resource requests and limits on every container. And most importantly - make sure you actually need Kubernetes before you build your architecture around it.
