What an Azure Landing Zone Audit Actually Finds

In this article

- Management Group Hierarchies That Grew Without a Plan

- Policy Assignments That Nobody Maintains

- Private Endpoint DNS That Doesn’t Work Properly

- Identity Architecture That Stopped Evolving

- Network Over-Engineering

- Cost Tagging That Doesn’t Produce Usable Reports

- Typical Remediation Timeline

- What a Platform Health Check Produces

In short: Across the dozens of enterprise Azure environments we have reviewed, the same six problems appear repeatedly: over-engineered management groups, unmaintained policies, broken private endpoint DNS, stale identity configurations, network sprawl, and cost tags that nobody trusts. Most are fixable in 3-5 weeks with a structured remediation plan.

We review Azure environments for a living. Production platforms that have been running for two, three, sometimes five years. The original architects have moved on, three different teams have layered decisions on top of each other, and the documentation stopped being updated somewhere around month six.

Having done this repeatedly across mid-size and large enterprises, we see the findings fall into the same areas. Azure landing zones are deceptively easy to deploy and genuinely hard to maintain. The initial deployment gets attention, budget, and a project team. The years that follow get whatever time the platform team can spare.

Here is what we consistently find.

| Finding | Typical severity | How common |
|---|---|---|
| Management group over-engineering | Medium | 70%+ of audits |
| Unmaintained policy assignments | High | Nearly universal |
| Private endpoint DNS failures | Critical | 60%+ of audits |
| Identity architecture gaps | High | 80%+ of audits |
| Network sprawl and stale rules | Medium | 70%+ of audits |
| Cost tagging that finance ignores | Medium | Nearly universal |

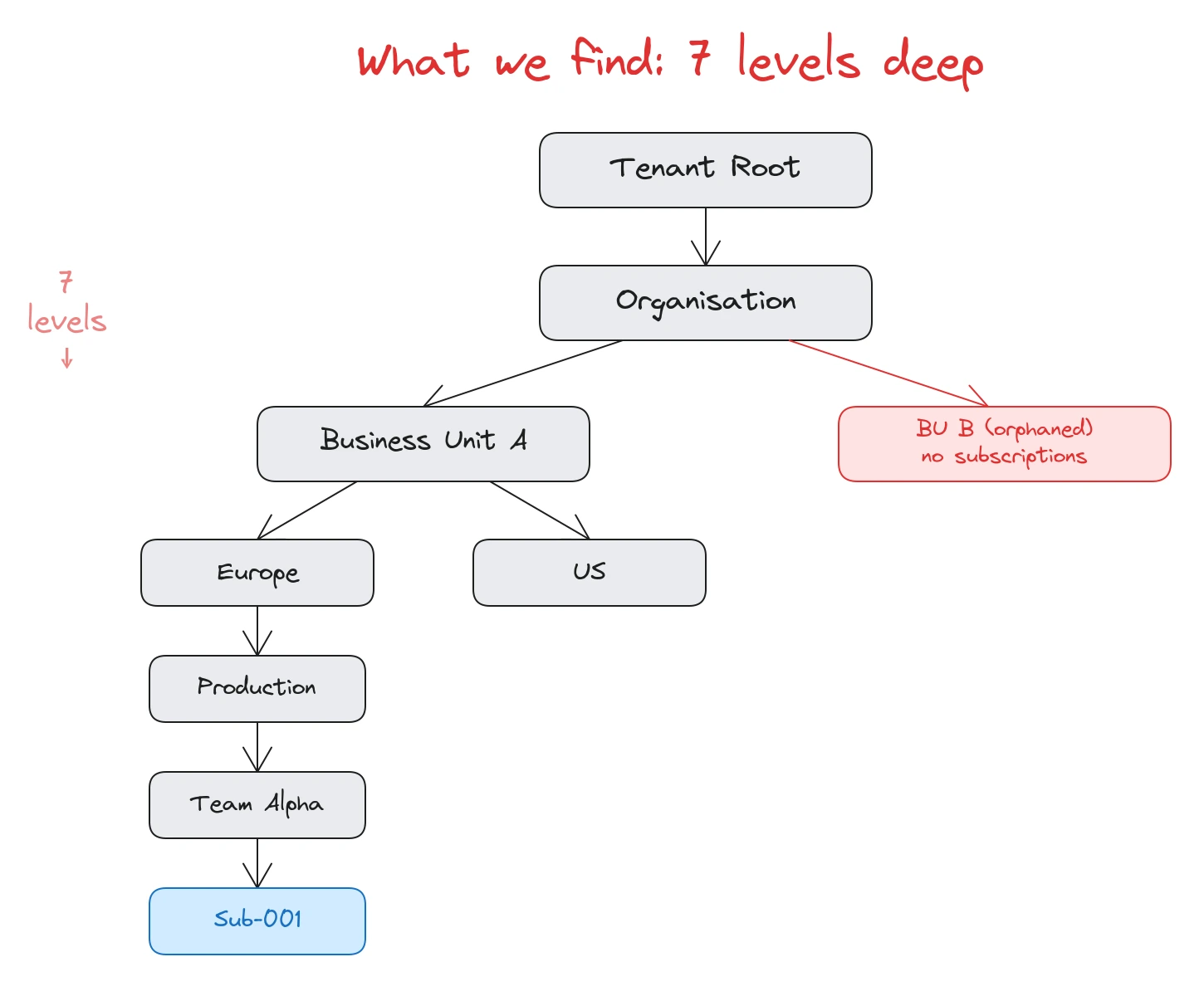

Management Group Hierarchies That Grew Without a Plan

The Azure Landing Zone reference architecture recommends three to four levels of management groups. That is enough for virtually every organisation. We wrote about this in our original landing zone lessons and again when we covered what matters in mature environments.

What we find in practice: six, seven, sometimes eight levels from the tenant root group down to the subscriptions. Extra tiers for business units, then sub-tiers for regions, then sub-sub-tiers for environments. Each level made sense to whoever added it. The cumulative result is a hierarchy that nobody can draw from memory.

The real damage isn’t cosmetic. Policy inheritance becomes unpredictable. RBAC assignments at one level contradict assignments at another. When a deployment fails because a deny policy assigned at the fourth level blocks a resource that the team believed was covered by an exemption created at the sixth level, the debugging path runs through every parent group. We regularly spend full days tracing policy evaluation chains that should take minutes.

Orphaned management groups are common too. A division was restructured, the subscriptions moved, but the empty management group and its policy assignments stayed. Nobody deleted it because nobody was sure it was safe to delete.

The fix is almost always to flatten. Remove levels that exist for organisational vanity rather than concrete governance requirements. If a management group doesn’t carry a policy assignment or RBAC boundary that differs from its parent, it probably shouldn’t exist.
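The flattening rule above is mechanical enough to script once the hierarchy has been exported. A minimal sketch, assuming you have already loaded each management group, its own (not inherited) policy and RBAC assignments, and its direct subscriptions into a simple in-memory model; the `ManagementGroup` class and all sample names are hypothetical, not an Azure SDK type. It lists non-root groups with nothing assigned at their own scope (flattening candidates), groups with no subscriptions and no children (the orphans described earlier), and a depth helper.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ManagementGroup:
    """Hypothetical model of one management group, not an Azure SDK type."""
    name: str
    parent: Optional[str]  # None marks the root of the exported tree
    policy_assignments: set[str] = field(default_factory=set)
    rbac_assignments: set[str] = field(default_factory=set)
    subscriptions: set[str] = field(default_factory=set)


def depth(groups: dict[str, ManagementGroup], name: str) -> int:
    """Number of levels between a group and the root (the root is 0)."""
    levels = 0
    current = groups[name]
    while current.parent is not None:
        levels += 1
        current = groups[current.parent]
    return levels


def flatten_candidates(groups: dict[str, ManagementGroup]) -> list[str]:
    """Non-root groups with no policy or RBAC assignment at their own scope.

    Assignments inherit downwards, so such a group governs exactly like its parent.
    """
    return sorted(
        mg.name
        for mg in groups.values()
        if mg.parent is not None
        and not mg.policy_assignments
        and not mg.rbac_assignments
    )


def orphaned(groups: dict[str, ManagementGroup]) -> list[str]:
    """Non-root groups with no subscriptions and no child groups."""
    parents = {mg.parent for mg in groups.values() if mg.parent is not None}
    return sorted(
        mg.name
        for mg in groups.values()
        if mg.parent is not None
        and not mg.subscriptions
        and mg.name not in parents
    )


if __name__ == "__main__":
    MG = ManagementGroup
    groups = {
        "root": MG("root", None, {"deny-public-ip"}),
        "platform": MG("platform", "root", {"deploy-diagnostics"}),
        "emea": MG("emea", "platform"),  # adds nothing of its own
        "emea-prod": MG("emea-prod", "emea", {"allowed-regions"},
                        subscriptions={"sub-prod-01"}),
        "old-division": MG("old-division", "root", {"legacy-tagging"}),
    }
    print(flatten_candidates(groups))  # ['emea']
    print(orphaned(groups))            # ['old-division']
    print(depth(groups, "emea-prod"))  # 3
```

The output is a review list, not a deletion list: a group can still be justified by something this model does not capture, such as a planned reorganisation.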

Policy Assignments That Nobody Maintains

A freshly deployed landing zone typically ships with a curated set of Azure Policy assignments: deny public IPs, enforce encryption, require tags, deploy diagnostic settings. These are correct and valuable. The problem starts around month eight.

New compliance requirements arrive. Someone adds a custom policy for naming conventions. Another team creates a policy for region restrictions. A third person copies a policy definition from a blog post. Exemptions get granted for workloads that can’t comply yet. More exemptions follow. Expiry dates get set but never tracked.

After two years, a typical environment has 150 to 300 policy assignments across the hierarchy, 30 to 60 exemptions (a third of which passed their expiry dates without anyone noticing), and 20+ custom policy definitions, some of which duplicate built-in policies that Microsoft shipped after the custom ones were written.
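A first pass at exemption hygiene can be scripted. A minimal sketch, assuming the exemptions have been exported (for example with `az policy exemption list`) into dicts carrying a `name` and an optional ISO 8601 `expiresOn`, the property name the policy exemption resource uses; check the exact output shape of your tooling before relying on it. An expired exemption no longer exempts anything, so each one is either a resource that has quietly become non-compliant or a record that can simply be deleted. Exemptions with no expiry at all are reported too, since they are the ones most likely to outlive their reason.

```python
from datetime import datetime, timezone
from typing import Optional


def _parse_utc(timestamp: str) -> datetime:
    """Parse ISO 8601, treating a trailing 'Z' and naive values as UTC.

    Note: Python < 3.11 rejects fractional seconds longer than six digits.
    """
    parsed = datetime.fromisoformat(timestamp.replace("Z", "+00:00"))
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def review_exemptions(
    exemptions: list[dict], now: Optional[datetime] = None
) -> dict[str, list[str]]:
    """Split exemptions into already-expired ones and ones with no expiry."""
    now = now or datetime.now(timezone.utc)
    report: dict[str, list[str]] = {"expired": [], "no_expiry": []}
    for exemption in exemptions:
        expires_on = exemption.get("expiresOn")
        if not expires_on:
            report["no_expiry"].append(exemption["name"])
        elif _parse_utc(expires_on) < now:
            report["expired"].append(exemption["name"])
    return report


if __name__ == "__main__":
    # Hypothetical exemption names.
    sample = [
        {"name": "legacy-vm-waiver", "expiresOn": "2024-01-31T00:00:00Z"},
        {"name": "sql-migration", "expiresOn": "2030-01-01T00:00:00Z"},
        {"name": "forever-waiver"},
    ]
    print(review_exemptions(sample, datetime(2025, 6, 1, tzinfo=timezone.utc)))
```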

The compliance dashboard tells the story. We regularly see management groups at 55-65% compliance. When we ask when someone last reviewed the compliance numbers, the answer is usually a blank stare or “we check it when something breaks.”

Policy hygiene requires the same discipline as code hygiene. Quarterly reviews, deduplication against the expanding built-in library, removal of stale exemptions, and a documented process for granting new ones. Most organisations have none of this.

Pattern: Management groups and policies are governance infrastructure. They get built once and maintained never. The gap between intended governance and actual governance widens every quarter.

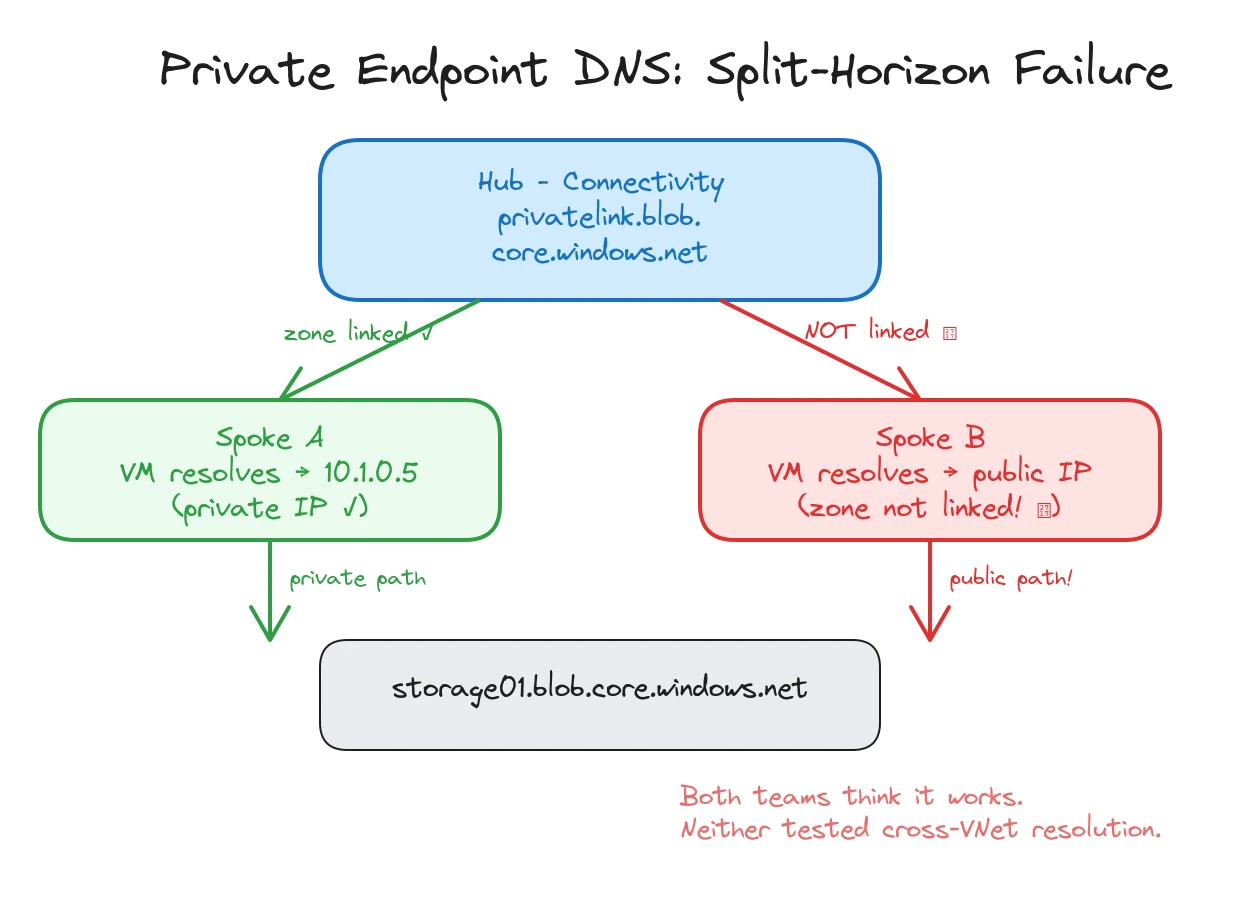

Private Endpoint DNS That Doesn’t Work Properly

Private endpoints are everywhere in modern Azure. Every storage account, key vault, SQL database, and container registry that needs to stay off the public internet gets a private endpoint. Each one needs a DNS record in the right private DNS zone so that clients resolve the service name to the private IP instead of the public one.

The recommended pattern is clear: centralised private DNS zones in the connectivity subscription, linked to all relevant VNets, with Azure Policy attaching each new private endpoint to the matching zone so that its DNS records are created automatically.

What we actually find falls into three categories.

Missing zones. Someone created a private endpoint for a Cosmos DB account but the privatelink.documents.azure.com zone doesn’t exist in the connectivity subscription. The endpoint works from machines that use the VNet’s DNS, but resolution falls back to public DNS for anything outside that VNet. The data path goes over the Microsoft backbone either way, but the security team doesn’t know their “private” endpoint resolves publicly from half the network.
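Missing zones are the easiest category to detect offline. A minimal sketch, assuming you have exported the group ID of every private endpoint connection (the sub-resource, such as `blob` or `vault`) and the names of the zones that exist in the connectivity subscription. The mapping covers only a handful of public-cloud services and should be extended from Microsoft's published list of private endpoint DNS zones; the sample inputs are hypothetical.

```python
# A few public-cloud mappings from private endpoint group ID to privatelink zone.
# Extend from Microsoft's published list for the services you actually use.
ZONE_FOR_GROUP_ID = {
    "blob": "privatelink.blob.core.windows.net",      # Storage (Blob)
    "vault": "privatelink.vaultcore.azure.net",       # Key Vault
    "sqlServer": "privatelink.database.windows.net",  # Azure SQL Database
    "registry": "privatelink.azurecr.io",             # Container Registry
    "Sql": "privatelink.documents.azure.com",         # Cosmos DB (NoSQL API)
}


def missing_zones(
    endpoint_group_ids: list[str], central_zones: list[str]
) -> tuple[list[str], list[str]]:
    """Return (required zones absent centrally, group IDs the map doesn't know)."""
    known = set(ZONE_FOR_GROUP_ID)
    required = {ZONE_FOR_GROUP_ID[g] for g in endpoint_group_ids if g in known}
    unmapped = sorted(set(endpoint_group_ids) - known)
    return sorted(required - set(central_zones)), unmapped


if __name__ == "__main__":
    missing, unmapped = missing_zones(
        ["blob", "Sql", "vault", "mysqlServer"],  # mysqlServer is not in the map
        ["privatelink.blob.core.windows.net", "privatelink.vaultcore.azure.net"],
    )
    print(missing)   # ['privatelink.documents.azure.com']
    print(unmapped)  # ['mysqlServer']
```

Reporting unmapped group IDs separately keeps the check honest: a service the map does not cover is flagged for manual review instead of silently passing.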

Split-horizon failures. The private DNS zone exists and is linked to some VNets but not others. Teams in VNet A resolve the storage account to its private IP. Teams in VNet B get the public IP. Both teams think everything is working. Nobody has tested cross-VNet resolution.
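Link coverage reduces to a set difference per zone. A minimal sketch, assuming you have exported each private DNS zone's virtual network links as VNet names; all names are hypothetical. `client_vnets` should be the VNets whose queries actually reach Azure-provided DNS: with a central resolver or DNS forwarders in the hub, that may be only the hub, and the spokes then need correct DNS server settings rather than direct links.

```python
def unlinked_vnets(
    zone_links: dict[str, list[str]], client_vnets: list[str]
) -> dict[str, list[str]]:
    """For each private DNS zone, list the client VNets it is not linked to."""
    gaps: dict[str, list[str]] = {}
    for zone, linked in sorted(zone_links.items()):
        missing = sorted(set(client_vnets) - set(linked))
        if missing:
            gaps[zone] = missing
    return gaps


if __name__ == "__main__":
    links = {
        "privatelink.blob.core.windows.net": ["vnet-hub", "vnet-a"],
        "privatelink.vaultcore.azure.net": ["vnet-hub", "vnet-a", "vnet-b"],
    }
    # VNet B resolves the storage account publicly, exactly the
    # "both teams think everything is working" case.
    print(unlinked_vnets(links, ["vnet-hub", "vnet-a", "vnet-b"]))
```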

Policy gaps. The DeployIfNotExists policy that should create DNS records is assigned, but its managed identity doesn’t have the rights it needs on the private DNS zone resource group (Contributor, or a narrower role such as Private DNS Zone Contributor). The assignment exists, so the control looks implemented, but its deployments fail and never actually create records. This one is surprisingly common and difficult to spot without testing actual name resolution.
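Testing actual name resolution can be as simple as resolving each endpoint's public service name from a machine inside each VNet and checking whether the answers are private. A minimal sketch using only the standard library; the hostname is a hypothetical placeholder. It treats RFC 1918 and other ranges that Python's `ipaddress` marks as private as "private", so an organisation that uses non-RFC 1918 address space in its VNets would need to compare against its own prefixes instead.

```python
import ipaddress
import socket


def classify(addresses: list[str]) -> dict[str, str]:
    """Label each IPv4 address as 'private' or 'public'."""
    return {
        addr: "private" if ipaddress.ip_address(addr).is_private else "public"
        for addr in addresses
    }


def resolve_ipv4(hostname: str) -> list[str]:
    """Resolve a hostname with this machine's own resolver, IPv4 only."""
    infos = socket.getaddrinfo(
        hostname, 443, family=socket.AF_INET, proto=socket.IPPROTO_TCP
    )
    return sorted({info[4][0] for info in infos})


def resolves_privately(hostname: str) -> tuple[bool, dict[str, str]]:
    """True only if the name resolves and every returned address is private."""
    labels = classify(resolve_ipv4(hostname))
    return bool(labels) and all(v == "private" for v in labels.values()), labels


if __name__ == "__main__":
    # Hypothetical endpoint; run from one machine per VNet you care about.
    try:
        print(resolves_privately("examplestorageacct.blob.core.windows.net"))
    except OSError as err:  # socket.gaierror is a subclass of OSError
        print(f"resolution failed: {err}")
```

Running the same check from several VNets is what surfaces the split-horizon and policy-gap cases, because configuration exports alone look healthy in both.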

The DNS audit is often the most time-consuming part of a landing zone review, and it produces the most urgent findings. A broken DNS configuration can mean that service names the security team believes resolve privately actually resolve to public endpoints, and the traffic follows.

Identity Architecture That Stopped Evolving

Azure identity patterns have changed significantly since most landing zones were deployed. Privileged Identity Management (PIM), Conditional Access, and workload identity federation are all table stakes now. We covered these shifts in our 2026 landing zone update.

What we find in audits is that the identity configuration reflects the year it was set up, not the current state of the art.

Standing Contributor and Owner assignments on production subscriptions are the most common finding. No PIM, no just-in-time activation, no approval workflow. The security team knows PIM exists but “hasn’t gotten to it yet” because the rollout requires touching every subscription.
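Building the inventory of standing access is the first step of any PIM rollout. A minimal sketch, assuming role assignments exported with `az role assignment list --all` and the fields `roleDefinitionName`, `principalName` and `scope`; verify the field names against your CLI version. PIM-eligible assignments are not plain role assignments, so everything this returns is active right now. A temporary PIM activation will also show up, so cross-check against PIM before treating a row as a finding. Sample principals are hypothetical.

```python
PRIVILEGED_ROLES = {"Owner", "Contributor"}


def standing_privileged(assignments: list[dict]) -> list[tuple[str, str, str]]:
    """Active Owner/Contributor assignments at any scope, sorted by principal."""
    return sorted(
        (
            # principalName can be empty for deleted principals; fall back to the ID.
            a.get("principalName") or a.get("principalId", ""),
            a["roleDefinitionName"],
            a["scope"],
        )
        for a in assignments
        if a["roleDefinitionName"] in PRIVILEGED_ROLES
    )


if __name__ == "__main__":
    sample = [
        {"principalName": "ops-team@contoso.com", "roleDefinitionName": "Owner",
         "scope": "/subscriptions/00000000-0000-0000-0000-000000000001"},
        {"principalName": "reader-bot", "roleDefinitionName": "Reader",
         "scope": "/subscriptions/00000000-0000-0000-0000-000000000001"},
        {"principalName": "platform-admins", "roleDefinitionName": "Contributor",
         "scope": "/providers/Microsoft.Management/managementGroups/prod"},
    ]
    for row in standing_privileged(sample):
        print(row)
```

Management-group scopes are included deliberately: an Owner assignment on a production management group is standing access to every subscription underneath it.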

Shared service accounts with client secrets stored in key vaults are the second most common. CI/CD pipelines authenticate using long-lived secrets that were created two years ago and have never been rotated. Workload identity federation would eliminate these secrets entirely, but the migration requires changes to every pipeline.
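For illustration only, assuming the pipelines run on GitHub Actions (the article does not name a CI system): workload identity federation replaces the stored secret with a trust relationship on the app registration, scoped to one repository and branch. This sketch builds the federated credential body; the organisation, repository and credential names are hypothetical. Saved as JSON, a body like this can be passed to `az ad app federated-credential create --id <app-id> --parameters credential.json`. Other CI systems, including Azure DevOps, use a different issuer and subject format.

```python
import json


def github_federated_credential(
    name: str, org: str, repo: str, branch: str = "main"
) -> dict:
    """Federated identity credential body trusting one GitHub Actions branch.

    Issuer, subject format and audience are the values GitHub Actions OIDC
    tokens carry when exchanged with Microsoft Entra ID.
    """
    return {
        "name": name,
        "issuer": "https://token.actions.githubusercontent.com",
        "subject": f"repo:{org}/{repo}:ref:refs/heads/{branch}",
        "audiences": ["api://AzureADTokenExchange"],
        "description": f"Deployments from {org}/{repo} on {branch}",
    }


if __name__ == "__main__":
    body = github_federated_credential("deploy-main", "contoso", "platform-infra")
    print(json.dumps(body, indent=2))
```

The narrow subject is the point of the design: a token from a different repository or branch does not match, so there is no secret to leak and nothing to rotate.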

Workload identities are another blind spot. App registrations and service principals accumulate over time with no ownership tracking. We find dozens of app registrations where the original creator has left the organisation, nobody knows what the application does, and the credentials are still active. Applications that run on Azure compute but authenticate to other Azure services using client secrets instead of managed identity add unnecessary credential management overhead and rotation risk. Managed identity eliminates the secret entirely, but the migration requires identifying every authentication flow.
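Ownerless app registrations that still hold live credentials are the highest-risk subset, and they are easy to list once the data is exported. A minimal sketch, assuming Microsoft Graph application objects with `displayName` and `passwordCredentials[].endDateTime`. Owners are a separate Graph relationship, so this assumes they have already been fetched into an `owners` list on each dict. Only client secrets are checked here, not certificate credentials. Sample apps are hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional


def _to_utc(timestamp: str) -> datetime:
    """Parse ISO 8601, treating a trailing 'Z' and naive values as UTC."""
    parsed = datetime.fromisoformat(timestamp.replace("Z", "+00:00"))
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def ownerless_with_live_secrets(
    apps: list[dict], now: Optional[datetime] = None
) -> list[str]:
    """App registrations with no owners that still hold an unexpired secret."""
    now = now or datetime.now(timezone.utc)
    flagged = []
    for app in apps:
        if app.get("owners"):
            continue  # someone is accountable for this one
        live = [
            cred
            for cred in app.get("passwordCredentials", [])
            if _to_utc(cred["endDateTime"]) > now
        ]
        if live:
            flagged.append(app["displayName"])
    return sorted(flagged)


if __name__ == "__main__":
    apps = [
        {"displayName": "legacy-etl", "owners": [],
         "passwordCredentials": [{"endDateTime": "2026-03-01T00:00:00Z"}]},
        {"displayName": "owned-api", "owners": ["alice@contoso.com"],
         "passwordCredentials": [{"endDateTime": "2026-03-01T00:00:00Z"}]},
        {"displayName": "dead-secret", "owners": [],
         "passwordCredentials": [{"endDateTime": "2024-01-01T00:00:00Z"}]},
    ]
    print(ownerless_with_live_secrets(apps, datetime(2025, 6, 1, tzinfo=timezone.utc)))
```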

Conditional Access gaps round out the picture. Policies exist for end users but not for administrative access. Or they exist but don’t cover device compliance, so an admin can activate a Global Admin role from an unmanaged personal laptop.
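A rough first check for the device-compliance gap can run over exported Conditional Access policies. A minimal sketch, assuming Microsoft Graph `conditionalAccessPolicy` objects with `state`, `conditions.users.includeRoles` and `grantControls` (`operator`, `builtInControls`). It only considers policies that target directory roles directly. It ignores exclusions, `includeUsers` targeting and PIM authentication contexts, so a clean result is not proof of coverage. The role ID below is the built-in Global Administrator role template ID.

```python
# Role template ID of the built-in Global Administrator role in Microsoft Entra ID.
GLOBAL_ADMIN = "62e90394-69f5-4237-9190-012177145e10"


def roles_without_device_control(
    policies: list[dict], role_ids: tuple = (GLOBAL_ADMIN,)
) -> list[str]:
    """Role IDs that no enabled policy forces onto a compliant device."""
    covered: set[str] = set()
    for policy in policies:
        if policy.get("state") != "enabled":
            continue  # disabled and report-only policies enforce nothing
        grant = policy.get("grantControls") or {}
        controls = grant.get("builtInControls") or []
        if "compliantDevice" not in controls:
            continue
        if grant.get("operator") == "OR" and len(controls) > 1:
            continue  # the user can satisfy another control instead
        users = (policy.get("conditions") or {}).get("users") or {}
        covered.update(users.get("includeRoles") or [])
    return sorted(set(role_ids) - covered)
```

Treating `OR` combinations as uncovered is deliberate: "MFA or compliant device" still lets an admin sign in from an unmanaged laptop with MFA alone.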

None of these are exotic findings. They are well-documented best practices that require dedicated effort to implement. That effort rarely gets prioritised when the platform team is busy fighting fires elsewhere.

Pattern: DNS and identity are the two areas where the gap between “it was set up correctly once” and “it still works correctly today” is largest. Both require periodic validation, not just initial configuration.

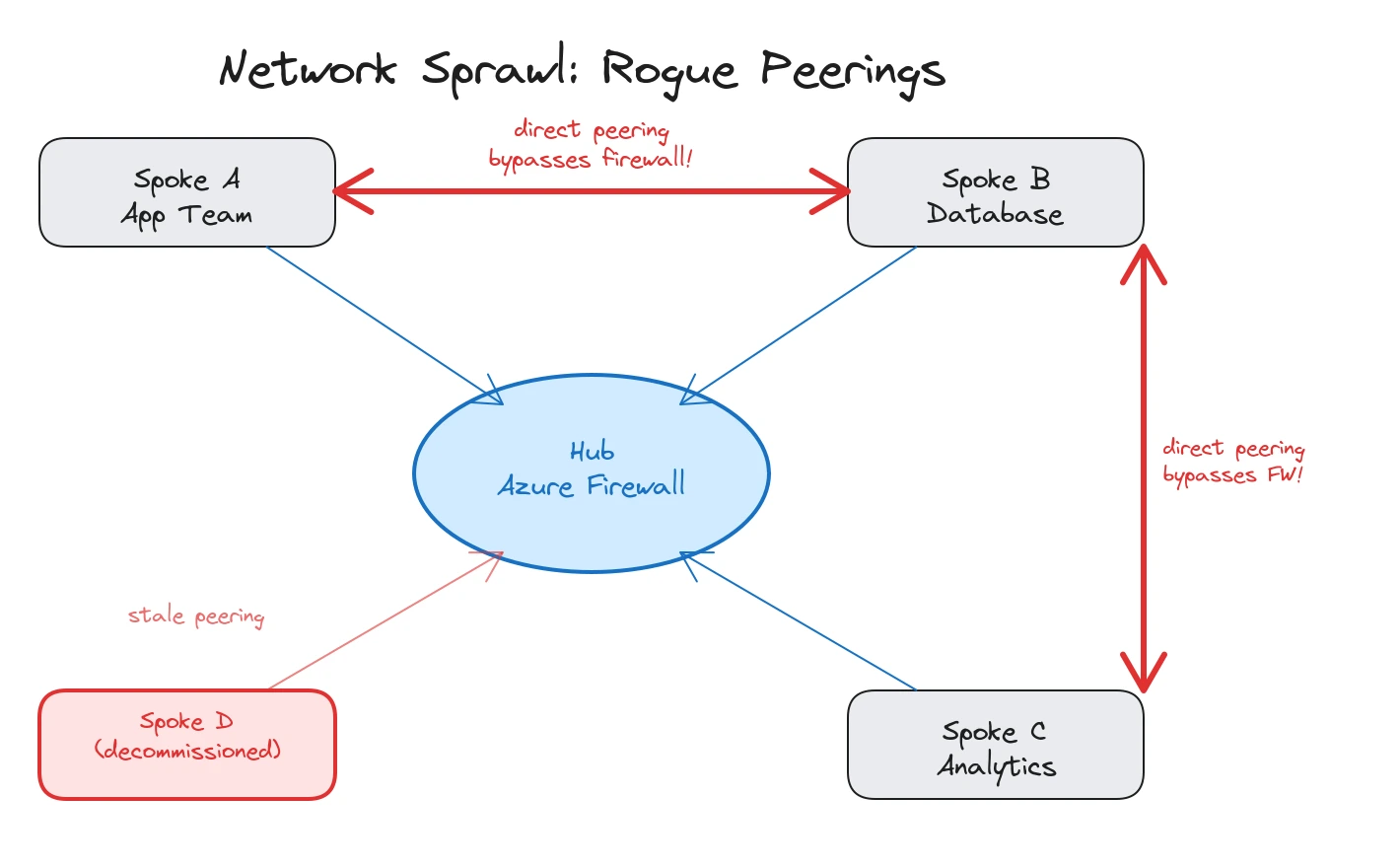

Network Over-Engineering

Hub-spoke is the correct default topology, and most enterprises we audit have it deployed. The problem isn’t the topology. It’s what happened after the initial deployment.

Unused spokes are common. A project was planned, a spoke VNet was provisioned and peered to the hub, but the project was cancelled or moved to a different approach. The peering stays, the address space is allocated, and the hub firewall has rules for traffic that will never arrive.

Peering meshes are worse. Someone needed spoke-to-spoke communication and, instead of routing through the hub firewall, added direct peerings. Then another team did the same. After a few rounds, the network diagram looks like a plate of spaghetti and nobody can answer the question “can VNet A talk to VNet B?” without tracing every peering and route table.
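Because VNet peering is not transitive, the first half of "can VNet A talk to VNet B?" is simply whether a direct peering exists. A minimal sketch, assuming peerings exported as pairs of VNet names. Azure models each peering as two one-way resources; here they are treated as undirected. Paths through the hub depend on route tables and firewall rules, which this deliberately ignores. All names are hypothetical.

```python
def spoke_to_spoke_peerings(
    peerings: list[tuple[str, str]], hub: str
) -> list[tuple[str, ...]]:
    """Direct peerings that bypass the hub, de-duplicated and order-independent."""
    direct = {tuple(sorted(pair)) for pair in peerings if hub not in pair}
    return sorted(direct)


def peers_of(peerings: list[tuple[str, str]], vnet: str) -> list[str]:
    """VNets with a direct peering to `vnet`."""
    return sorted({b if a == vnet else a for a, b in peerings if vnet in (a, b)})


if __name__ == "__main__":
    peerings = [
        ("vnet-hub", "vnet-a"), ("vnet-hub", "vnet-b"),
        ("vnet-a", "vnet-b"), ("vnet-b", "vnet-a"),  # both halves of one peering
        ("vnet-c", "vnet-a"),                        # vnet-c never joined the hub
    ]
    print(spoke_to_spoke_peerings(peerings, "vnet-hub"))
    print(peers_of(peerings, "vnet-a"))
```

Every pair returned by `spoke_to_spoke_peerings` is traffic that never passes the hub firewall, which makes it a natural first list to review.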

Firewall rules accumulate in the same way as policy assignments. Rules get added for new workloads but never removed when workloads are decommissioned. We routinely find Azure Firewall rule collections with 200+ rules, a third of which reference IP addresses that no longer exist. The last rule review date is either “never” or “when we deployed it.”
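Finding rules that point at addresses which no longer exist is mostly an inventory problem. A minimal sketch, assuming network rules exported with `sourceAddresses` and `destinationAddresses` (the Azure Firewall network rule property names) and a list of addresses currently in use (NICs, private endpoints, and representative on-premises addresses, or on-premises rules will show up as false positives). Entries that are not IPs or CIDRs, such as `*`, FQDNs, service tags and IP groups, are left for manual review rather than flagged. Sample rules are hypothetical.

```python
import ipaddress


def _side_is_dead(values: list[str], live: list) -> bool:
    """True if every entry is an IP/CIDR and none of them covers a live address."""
    networks = []
    for value in values:
        if value == "*":
            return False
        try:
            networks.append(ipaddress.ip_network(value, strict=False))
        except ValueError:
            return False  # FQDN, service tag or IP group: review manually
    return bool(networks) and not any(ip in net for net in networks for ip in live)


def stale_rules(rules: list[dict], live_addresses: list[str]) -> list[str]:
    """Names of rules whose source or destination side matches nothing live."""
    live = [ipaddress.ip_address(a) for a in live_addresses]
    return [
        rule["name"]
        for rule in rules
        if _side_is_dead(rule.get("sourceAddresses", []), live)
        or _side_is_dead(rule.get("destinationAddresses", []), live)
    ]


if __name__ == "__main__":
    rules = [
        {"name": "allow-sql", "sourceAddresses": ["10.1.0.0/24"],
         "destinationAddresses": ["10.2.0.4"]},
        {"name": "allow-old-app", "sourceAddresses": ["10.1.0.0/24"],
         "destinationAddresses": ["10.9.9.9"]},
        {"name": "allow-web", "sourceAddresses": ["*"],
         "destinationAddresses": ["AzureCloud"]},
    ]
    print(stale_rules(rules, ["10.1.0.5", "10.2.0.4"]))  # ['allow-old-app']
```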

The network audit isn’t about finding the wrong topology. It’s about finding the gap between the intended design and what actually exists after two years of incremental changes without cleanup.

Cost Tagging That Doesn’t Produce Usable Reports

Every landing zone enforces tags. Cost centre, environment, owner, application. The policy is in place, the tags exist on resources, and the FinOps team has dashboards in Cost Management. On paper, cost allocation works.

In practice, three problems recur.

Tags on resources but not on resource groups. Azure Policy can enforce tags at the resource group level and use the Modify effect to inherit them down to resources, but many organisations enforce only at the resource level. Cost Management reports that group by resource group then show inconsistent tag values across the resources inside, making cost allocation unreliable.

No tag inheritance enabled. Azure supports tag inheritance policies that copy tags from resource groups or subscriptions down to resources. Most organisations don’t use them. The result is that a resource group tagged with cost centre “CC-5678” contains resources tagged with “CC-1234” because the resource was moved from a different group and its tags were never updated.
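The mismatch in that example is straightforward to detect before any inheritance policy is switched on. A minimal sketch, assuming a resource group's tags and its resources (each with a `name` and a `tags` dict, which Azure may return as null) have been exported; the `CostCentre` tag key and the sample values are hypothetical. Resources listed here are the ones an inheritance policy using the Modify effect would overwrite on remediation, so the list doubles as a preview of that change.

```python
def tag_drift(rg_tags: dict, resources: list[dict], key: str = "CostCentre") -> dict:
    """Resources whose value for `key` differs from their resource group's value."""
    expected = (rg_tags or {}).get(key)
    if expected is None:
        return {}  # the resource group has nothing to inherit
    drift = {}
    for resource in resources:
        actual = (resource.get("tags") or {}).get(key)  # tags may be None
        if actual != expected:
            drift[resource["name"]] = actual
    return drift


if __name__ == "__main__":
    resources = [
        {"name": "vm-app-01", "tags": {"CostCentre": "CC-5678"}},
        {"name": "st-moved-in", "tags": {"CostCentre": "CC-1234"}},
        {"name": "kv-untagged", "tags": None},
    ]
    print(tag_drift({"CostCentre": "CC-5678"}, resources))
```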

Stale values. Departments restructure, projects end, cost centres change. The tags stay. After a couple of years, 15-20% of cost centre tags reference codes that no longer exist in the finance system. The FinOps reports show spend against phantom departments, and the finance team stops trusting the numbers.

Tag accuracy is a data quality problem, not a policy problem. Policy can enforce tag presence and apply inheritance patterns, but it cannot validate whether a cost centre still exists in your finance system or whether the listed owner still works at the company. Enforcement at creation time is necessary but not sufficient. You need periodic reconciliation against the authoritative source (and that source is usually outside Azure), automated correction where possible, and a process for handling mismatches.
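The reconciliation step can start as a small script that finance can read. A minimal sketch, assuming cost rows exported from Cost Management grouped by the cost-centre tag (an empty value meaning untagged) and a set of currently valid codes pulled from the finance system, which sits outside Azure. It reports the share of spend against valid codes, unknown codes, and no code at all; the codes and amounts are hypothetical.

```python
from typing import Optional


def reconcile_spend(
    rows: list[tuple[Optional[str], float]], valid_codes: set[str]
) -> dict[str, float]:
    """Share of spend by tag status: valid code, unknown code, or untagged."""
    totals = {"valid": 0.0, "unknown": 0.0, "untagged": 0.0}
    for cost_centre, cost in rows:
        if not cost_centre:
            totals["untagged"] += cost
        elif cost_centre in valid_codes:
            totals["valid"] += cost
        else:
            totals["unknown"] += cost  # a phantom department
    grand_total = sum(totals.values())
    if grand_total == 0:
        return totals
    return {status: amount / grand_total for status, amount in totals.items()}


if __name__ == "__main__":
    rows = [("CC-1001", 600.0), ("CC-9999", 250.0), (None, 150.0)]
    print(reconcile_spend(rows, {"CC-1001"}))
    # {'valid': 0.6, 'unknown': 0.25, 'untagged': 0.15}
```

Tracking the "unknown" share over time gives the finance team a single number to watch, which is usually what rebuilds trust in the reports.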

Typical Remediation Timeline

Fixing a landing zone takes more than a single sprint. Based on what we see across engagements, a realistic timeline looks like this:

- Week 1: Architecture assessment and findings report. Walk through the environment, document the gaps, and prioritise by severity and effort.

- Week 2: Governance baseline. Flatten the management group hierarchy, remove stale policy assignments, consolidate duplicate definitions.

- Weeks 3-4: Identity and policy remediation. Roll out PIM for standing privileged access, migrate workload identities to managed identity or federated credentials, close Conditional Access gaps.

- Week 5 onwards: Network simplification and cost optimisation. Clean up unused peerings, consolidate firewall rules, fix DNS zone coverage, and address tagging accuracy.

Each phase builds on the previous one. Governance cleanup makes policy remediation cleaner, and identity fixes reduce the blast radius before network changes begin.

What a Platform Health Check Produces

A structured landing zone audit produces concrete, actionable deliverables:

- Management group hierarchy assessment with specific recommendations to flatten or restructure, including impact analysis for each change

- Policy health review: duplicate definitions, stale exemptions, compliance gaps by management group, and a prioritised remediation backlog

- Private endpoint DNS audit: zone inventory, VNet link coverage, policy effectiveness testing, and a list of endpoints that resolve publicly when they shouldn’t

- Identity posture report: standing privileged access inventory, PIM readiness assessment, workload identity migration candidates, and Conditional Access gap analysis

- Network topology review: unused spokes and peerings, firewall rule hygiene, and a current-state diagram compared against the intended design

- Cost tagging assessment: tag coverage and accuracy rates, orphaned tag values, and recommendations for inheritance and reconciliation

Each finding comes with a severity rating and a remediation path. The goal is a backlog that the platform team can execute against, not a list of observations.

If your landing zones have been running for more than a year without a structured review, there are findings waiting. The question is whether you find them proactively or discover them during an incident.

Typical engagement: When reviewing Azure platforms we usually start with a structured Azure architecture assessment covering landing zone structure, management group hierarchy, policy health, identity posture, network topology, and cost tagging. The assessment produces a prioritised findings report with severity ratings and a remediation roadmap. Most reviews take 2-3 weeks.
