From Kubernetes to $0.50/month: Migrating a Real-Time App to AWS Serverless

In this article

- The Before: What I Was Running

- The After: Fully Serverless on AWS

- Key Decision: API Gateway WebSocket API

- DynamoDB: Two Tables, Zero Maintenance

- Rooms Table

- Connections Table

- The Broadcast Pattern: No Rooms, Just Queries

- CloudFormation: Everything in One Template

- SPA Routing Without a Server

- Cold Starts: The One Trade-off

- What I Decommissioned

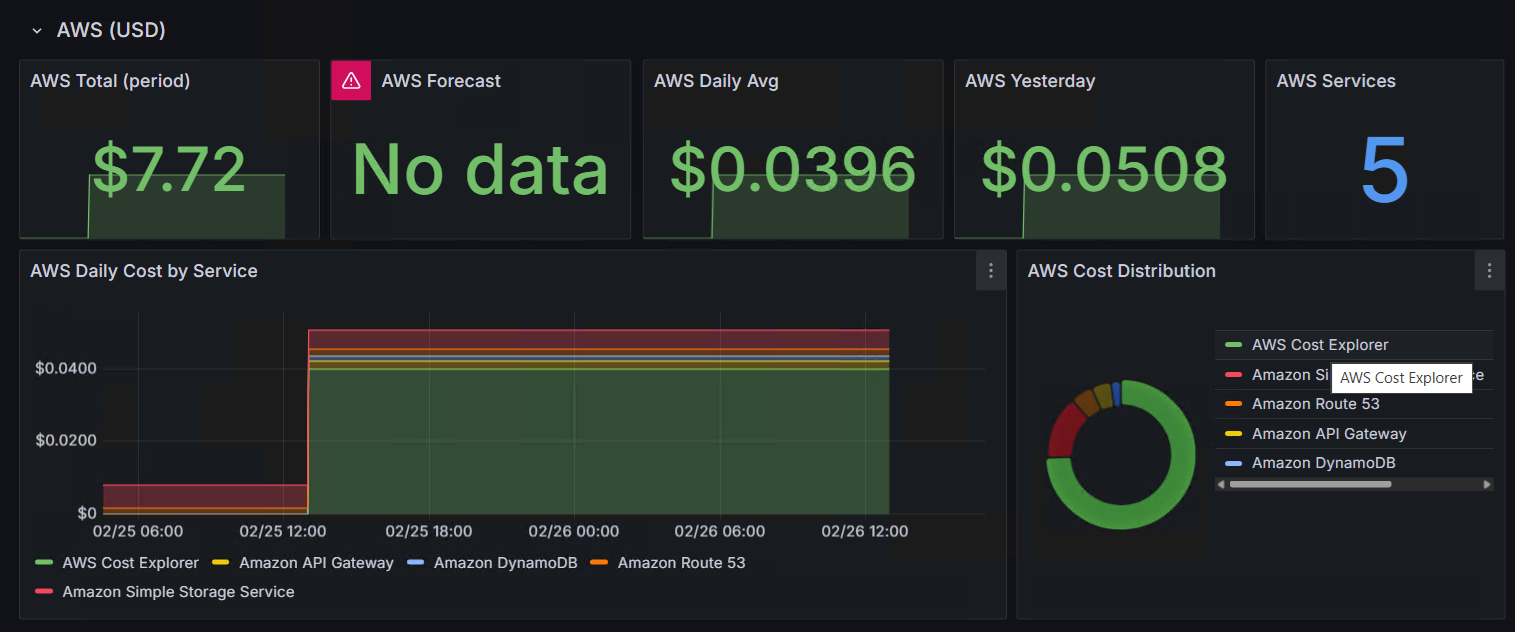

- Cost Comparison

- When Serverless WebSocket Makes Sense

- Lessons From the Migration

I had a planning poker tool running on my k3s cluster. Node.js, Express, Socket.io, in-memory state. It worked. It also consumed resources on a node that was doing more important things, lost all state on pod restart, and required a reverse proxy configuration, TLS certificates, and network policies just to exist.

You can try the result at poker.genicot.eu - zero login, zero friction.

The question was simple: can I move this to serverless, keep the same real-time experience, and pay essentially nothing?

The answer: $0.50/month, and that is just the Route53 hosted zone fee. Everything else fits within AWS free tier.

The Before: What I Was Running

A classic Node.js real-time stack: Express with Socket.io for WebSocket connections, a JavaScript Map in memory for all state (rooms, participants, votes, all gone on restart), and vanilla HTML/CSS/JS served by Express. It ran as a Kubernetes pod behind an NGINX reverse proxy with ModSecurity WAF, OAuth2-Proxy sidecar, and cert-manager for TLS, locked down with NetworkPolicy rules for namespace isolation and dedicated egress rules.

For a planning poker tool used by a handful of people per week, all of that was overkill. The pod sat idle 99% of the time, consuming memory and CPU on the cluster. The NGINX configuration alone was 50+ lines of location blocks, proxy headers, and WebSocket upgrade handling.

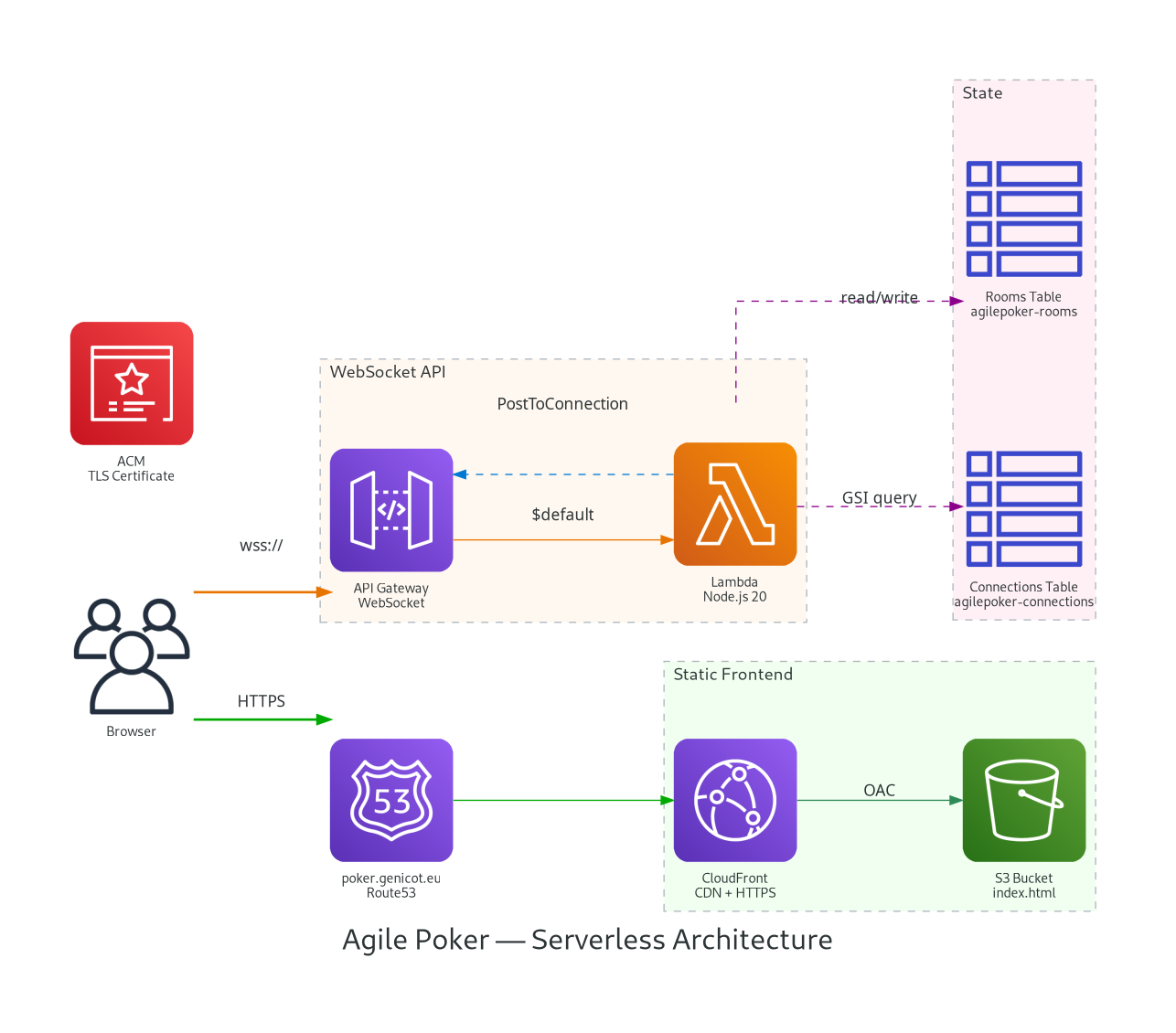

The After: Fully Serverless on AWS

Four components replaced the entire stack:

| Service | Purpose | Cost |

|---|---|---|

| S3 + CloudFront | Frontend static hosting with CDN | Free tier |

| API Gateway (WebSocket) | Managed WebSocket connections | Free tier (1M msgs/mo) |

| Lambda (Node.js 20, 128MB) | All business logic | Free tier (1M req/mo) |

| DynamoDB (on-demand) | Room + connection state | Per-request billing, effectively $0 at this volume |

Straightforward wiring:

```
Browser
  |
  +-- Static assets (HTML/CSS/JS) --> CloudFront --> S3
  |
  +-- WebSocket (wss://) --> API Gateway WebSocket API --> Lambda --> DynamoDB
```

No servers. No containers. No reverse proxy. No certificate management (ACM auto-renews). No network policies. No pod scheduling concerns.

Key Decision: API Gateway WebSocket API

Real-time communication was the critical choice. Socket.io provides nice abstractions - rooms, namespaces, automatic reconnection, fallback to long-polling - but it requires a persistent server.

API Gateway’s WebSocket API gives you managed WebSocket connections without a server. On the plus side: zero infrastructure to manage, automatic scaling via Lambda concurrency, connection management handled by AWS, and pay-per-message pricing (effectively free at my scale). I gave up Socket.io’s room abstraction (replaced with DynamoDB queries), automatic reconnection (replaced with exponential backoff in the client), and server-side emit (replaced with PostToConnection API calls).
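The client-side reconnection mentioned above could look roughly like the sketch below. The helper names (`backoffDelay`, `connectWithBackoff`) and the 500 ms base and 30 s cap are assumptions for illustration, not values taken from the actual tool.

```javascript
// Hypothetical helper: delay in ms before reconnect attempt `attempt` (0-based).
// Doubles on every attempt and is capped at `maxMs`.
function backoffDelay(attempt, baseMs = 500, maxMs = 30000) {
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

// Hypothetical wrapper (browser): opens a socket and schedules a reconnect
// with a growing delay whenever the connection closes.
function connectWithBackoff(url, onMessage, attempt = 0) {
  const ws = new WebSocket(url);
  // A successful open resets the backoff sequence.
  ws.onopen = () => { attempt = 0; };
  ws.onmessage = (event) => onMessage(JSON.parse(event.data));
  ws.onclose = () => {
    setTimeout(
      () => connectWithBackoff(url, onMessage, attempt + 1),
      backoffDelay(attempt)
    );
  };
  return ws;
}
```

A production client would probably also add jitter and stop retrying after some number of attempts.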

Migrating from Socket.io to native WebSocket was less painful than expected. The client side went from:

```javascript
// Before: Socket.io
const socket = io('https://poker.genicot.eu');
socket.emit('create-room', { userName: 'Alice' });
socket.on('room-created', (data) => { ... });
```

To:

```javascript
// After: Native WebSocket
const ws = new WebSocket('wss://xxx.execute-api.eu-west-1.amazonaws.com/prod');
ws.send(JSON.stringify({ action: 'create-room', userName: 'Alice' }));
ws.onmessage = (event) => {
  const data = JSON.parse(event.data);
  if (data.action === 'room-created') { ... }
};
```

More verbose, but no dependencies. The entire frontend remains a single HTML file - no build step, no npm, no bundler.
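Once there are more than a couple of message types, the `if (data.action === ...)` chain can be replaced with a small lookup table that recovers some of the feel of `socket.on(...)`. The sketch below is one way to do that; `createDispatcher` is a hypothetical helper, not part of the original frontend.

```javascript
// Hypothetical helper: builds an onmessage handler that routes each parsed
// message to the handler registered for its `action` field.
// Returns true if a handler ran, false otherwise.
function createDispatcher(handlers) {
  return (event) => {
    let data;
    try {
      data = JSON.parse(event.data);
    } catch {
      return false; // ignore frames that are not valid JSON
    }
    if (!data || typeof data !== 'object') return false;
    // Own-property check so names like "constructor" never match by accident.
    if (!Object.prototype.hasOwnProperty.call(handlers, data.action)) return false;
    handlers[data.action](data);
    return true;
  };
}

// Usage (browser):
// ws.onmessage = createDispatcher({
//   'room-created': (msg) => showRoom(msg),   // showRoom is hypothetical
//   'room-state': (msg) => render(msg),       // render is hypothetical
// });
```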

DynamoDB: Two Tables, Zero Maintenance

In-memory state was convenient but fragile. With DynamoDB, state survives Lambda cold starts, and I get 24-hour TTL cleanup for free.

Rooms Table

| Attribute | Type | Purpose |
|---|---|---|
| roomCode (PK) | String | Identifier like happy-tiger |
| revealed | Boolean | Whether votes are visible |
| currentTopic | String | What we are estimating |
| cardScale | String | fibonacci, tshirt, or powers |
| ttl | Number | Auto-expire 24h after creation |
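DynamoDB TTL expects the expiry as a Number holding a Unix timestamp in seconds, not milliseconds. A minimal sketch of building a room item with that attribute follows; `buildRoomItem`, its defaults, and the empty initial topic are assumptions for illustration.

```javascript
const ROOM_TTL_SECONDS = 24 * 60 * 60; // 24 hours, as in the table above

// Hypothetical helper: builds the item written to the Rooms table.
// `nowMs` is injectable so the function is easy to test.
function buildRoomItem(roomCode, cardScale = 'fibonacci', nowMs = Date.now()) {
  return {
    roomCode,
    revealed: false,
    currentTopic: '',
    cardScale,
    // TTL must be epoch *seconds*; Date.now() returns milliseconds.
    ttl: Math.floor(nowMs / 1000) + ROOM_TTL_SECONDS,
  };
}
```

TTL deletion is a background process, so an expired item can remain readable for a while after its `ttl` has passed. Code that cares about exact expiry should compare `ttl` with the current time itself.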

Connections Table

| Attribute | Type | Purpose |
|---|---|---|
| connectionId (PK) | String | API Gateway WebSocket connection ID |
| roomCode (GSI) | String | Which room this connection belongs to |
| userName | String | Display name |
| vote | String/Null | Current vote value |
| isHost | Boolean | Room creator flag |
| ttl | Number | Auto-expire 24h |

A Global Secondary Index on roomCode makes the broadcast pattern possible. When someone votes, the Lambda:

- Updates the vote on their connection record

- Queries the GSI for all connections in the room

- Builds the state payload (participants, stats, revealed status)

- Fans out PostToConnection calls to all participants in parallel

```javascript
async function broadcastRoomState(apigw, roomCode) {
  // Load the room record and its connections in parallel
  const [room, connections] = await Promise.all([
    getRoom(roomCode),
    getRoomConnections(roomCode) // GSI query
  ]);

  const state = {
    action: 'room-state',
    roomCode,
    participants: connections.map(c => ({
      name: c.userName,
      hasVoted: c.vote !== null,
      // Hide individual votes until the host reveals them
      vote: room.revealed ? c.vote : null,
      // ... avatar, color, host/spectator flags
    })),
    revealed: room.revealed,
    stats: room.revealed ? computeStats(connections) : null
  };

  // Fan-out to all connections
  await Promise.all(
    connections.map(c => sendToConnection(apigw, c.connectionId, state))
  );
}
```

Stale connections (when someone closes their browser without a clean WebSocket close) are automatically cleaned up: the PostToConnection call throws a GoneException, and I delete the connection record.
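The stale-connection cleanup could be sketched as below. This is not the article's `sendToConnection`: the function name `sendOrPrune` is hypothetical, and the two callbacks are injected so the sketch runs without the AWS SDK. In a real Lambda, `postToConnection` would wrap the API Gateway Management API call and `deleteConnection` would delete the item from the Connections table.

```javascript
// Hypothetical helper: sends a payload to one connection and prunes the
// connection record if API Gateway reports the connection as gone.
// Returns 'sent' or 'stale'; any other error is rethrown.
async function sendOrPrune(postToConnection, deleteConnection, connectionId, payload) {
  try {
    await postToConnection({
      ConnectionId: connectionId,
      Data: JSON.stringify(payload),
    });
    return 'sent';
  } catch (err) {
    // A closed connection surfaces as GoneException (HTTP 410).
    const gone =
      err.name === 'GoneException' || err.$metadata?.httpStatusCode === 410;
    if (!gone) throw err;
    await deleteConnection(connectionId);
    return 'stale';
  }
}
```

Swallowing only the "gone" case matters when the sends run under `Promise.all`: one rejected send would otherwise reject the whole broadcast.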

The Broadcast Pattern: No Rooms, Just Queries

Socket.io has a built-in concept of rooms. You join('room-123') and then io.to('room-123').emit(...) broadcasts to everyone in that room. Elegant.

With API Gateway + DynamoDB, I simulate this with the GSI. Every state change triggers a broadcast:

- Vote submitted -> update connection -> query all room connections -> fan-out

- Votes revealed -> update room -> query all room connections -> fan-out

- Participant joins -> create connection -> query all room connections -> fan-out

- Participant disconnects -> delete connection -> query remaining -> fan-out

Every action costs a constant number of DynamoDB operations (one write, one read of the room record, and one GSI query that returns the N connection items) plus N API Gateway PostToConnection calls. The read capacity consumed by the query still grows with N, but for a planning poker room with 5-15 participants this is negligible. For a chat application with thousands of users per room, you would need a different approach (SQS fan-out, or EventBridge).
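The "query all room connections" step might look like the sketch below. The table name `Connections`, the index name `roomCode-index`, and the function names are assumptions. The `query` argument is an injected function that takes DocumentClient-style Query parameters and returns a page of results.

```javascript
// Hypothetical helper: Query parameters for the roomCode GSI.
// Table and index names are placeholders, not the real resource names.
function buildRoomConnectionsQuery(roomCode, tableName = 'Connections', indexName = 'roomCode-index') {
  return {
    TableName: tableName,
    IndexName: indexName,
    KeyConditionExpression: 'roomCode = :roomCode',
    ExpressionAttributeValues: { ':roomCode': roomCode },
  };
}

// Hypothetical helper: runs the query and follows LastEvaluatedKey
// until every page has been collected.
async function queryRoomConnections(query, roomCode) {
  const items = [];
  let ExclusiveStartKey;
  do {
    const page = await query({ ...buildRoomConnectionsQuery(roomCode), ExclusiveStartKey });
    items.push(...(page.Items ?? []));
    ExclusiveStartKey = page.LastEvaluatedKey;
  } while (ExclusiveStartKey);
  return items;
}
```

Queries against a global secondary index are eventually consistent, so a connection written a moment ago may occasionally be missing from the very next broadcast.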

CloudFormation: Everything in One Template

One CloudFormation template defines everything. No CDK, no Pulumi, no Terraform - just YAML. For a project this small, CloudFormation is the right tool. It covers:

- DynamoDB tables (both with on-demand billing and TTL)

- Lambda function with IAM role (least-privilege: DynamoDB + API Gateway manage connections)

- API Gateway WebSocket API with $connect, $disconnect, and $default routes

- S3 bucket with CloudFront OAC (Origin Access Control)

- CloudFront distribution with custom error responses for SPA routing

- Route53 A record aliased to CloudFront

- ACM certificate reference
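With a single Lambda behind all three routes, the handler has to branch on the route key that API Gateway puts in `event.requestContext`. The sketch below shows one way to normalize the event; `routeEvent` and the shape of its return value are assumptions, not the original handler.

```javascript
// Hypothetical helper: turns an API Gateway WebSocket event into a small
// descriptor that the rest of the handler can switch on.
function routeEvent(event) {
  const { routeKey, connectionId } = event.requestContext;
  if (routeKey === '$connect') return { type: 'connect', connectionId };
  if (routeKey === '$disconnect') return { type: 'disconnect', connectionId };

  // $default: the client sent a frame; expect JSON with an `action` field.
  let body;
  try {
    body = JSON.parse(event.body ?? '{}');
  } catch {
    return { type: 'invalid', connectionId };
  }
  if (!body || typeof body.action !== 'string') {
    return { type: 'invalid', connectionId };
  }
  return { type: 'action', connectionId, action: body.action, payload: body };
}
```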

A deploy script handles the orchestration: package Lambda, check ACM certificate status, deploy the stack, upload frontend with WebSocket URL injection, invalidate CloudFront cache.

SPA Routing Without a Server

Just a single index.html file serves as the frontend. Room URLs like poker.genicot.eu/happy-tiger need to resolve to that same file. On the old setup, NGINX handled this with a try_files fallback.

On CloudFront, the trick is custom error responses:

```yaml
CustomErrorResponses:
  - ErrorCode: 403
    ResponseCode: 200
    ResponsePagePath: /index.html
  - ErrorCode: 404
    ResponseCode: 200
    ResponsePagePath: /index.html
```

When S3 returns 403 (object not found via OAC) or 404 for /happy-tiger, CloudFront intercepts and returns index.html with a 200 status. The JavaScript in the page reads window.location.pathname and handles the routing.
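The client-side half of that routing can be very small. The sketch below assumes room codes always follow the two-lowercase-words pattern of `happy-tiger`; both that pattern and the `roomCodeFromPath` name are assumptions rather than details confirmed by the original code.

```javascript
// Hypothetical helper: extracts a room code from a URL path, or returns
// null when the path does not look like a room URL.
function roomCodeFromPath(pathname) {
  // First non-empty path segment, e.g. "/happy-tiger/" -> "happy-tiger"
  const segment = pathname.split('/').filter(Boolean)[0] ?? '';
  // Assumed format: two lowercase words joined by a hyphen.
  return /^[a-z]+-[a-z]+$/.test(segment) ? segment : null;
}

// Usage (browser):
// const roomCode = roomCodeFromPath(window.location.pathname);
// if (roomCode) { /* join the room */ } else { /* show the landing page */ }
```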

Cold Starts: The One Trade-off

Lambda cold starts are the one real performance concern with this architecture. After a period of inactivity (typically 15-45 minutes), the first WebSocket action triggers a cold start. With Node.js 20 and 128MB of memory, I measured:

- Cold start: 200-500ms for the first action

- Warm invocation: 5-15ms

For a planning poker tool, a half-second delay on the first room creation of the day is imperceptible. The WebSocket connection itself is handled by API Gateway (no Lambda involved), so the connection is instant - only the first message has the potential cold start penalty.

If cold starts were critical, I could use provisioned concurrency ($2-3/month) or a simple EventBridge scheduled rule to ping the Lambda every 10 minutes. I did neither - the UX is fine as-is.
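For the scheduled-ping option, the handler would have to recognize the EventBridge event and return early instead of treating it as a WebSocket message. A rough sketch, with `isWarmupPing` and `withWarmup` as hypothetical names:

```javascript
// EventBridge scheduled rules deliver events with this source and detail-type.
function isWarmupPing(event) {
  return event?.source === 'aws.events' && event['detail-type'] === 'Scheduled Event';
}

// Hypothetical wrapper: short-circuits warm-up pings and passes every other
// event through to the real handler.
function withWarmup(handler) {
  return async (event, context) => {
    if (isWarmupPing(event)) return { statusCode: 200, body: 'warm' };
    return handler(event, context);
  };
}

// Usage: exports.handler = withWarmup(realHandler); // realHandler is hypothetical
```

A ping only keeps one execution environment warm, so concurrent first requests can still hit cold starts.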

What I Decommissioned

On the Kubernetes side, removing the old deployment meant touching six files:

- Deleted the entire apps/webapps/agile-poker/ directory (Deployment, Service, ConfigMap)

- Removed the poker.conf server block from the NGINX reverse proxy ConfigMap

- Removed the poker certificate from cert-manager

- Removed three volume mounts from the reverse proxy Deployment

- Removed the egress NetworkPolicy rule

- Removed the DNS record pointing to the cluster node

Removing the reverse proxy configuration was a particular relief - one less server block, one less TLS certificate, one less set of WebSocket proxy headers to maintain.

Cost Comparison

| Aspect | Kubernetes Deployment | Serverless |

|---|---|---|

| Compute | ~200m CPU, ~256Mi RAM (always on) | $0 (Lambda free tier) |

| State | In-memory (lost on restart) | DynamoDB (persists 24h, auto-cleanup) |

| TLS | cert-manager + Let’s Encrypt | ACM (zero config, auto-renew) |

| CDN | None (direct to pod) | CloudFront (global edge caching) |

| DNS | Cluster node IP | Route53 ($0.50/mo) |

| Total monthly | Shared k8s resources | ~$0.50/month |

| Availability | Single pod, restarts = downtime | Multi-AZ, managed by AWS |

| Scaling | Manual replica adjustment | Automatic (Lambda concurrency) |

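Some free tier numbers for context: API Gateway gives 1 million WebSocket messages per month. Lambda gives 1 million requests and 400,000 GB-seconds. DynamoDB's 25 read/write capacity units apply to provisioned-capacity tables; an on-demand table is billed per request instead, which at this volume amounts to a fraction of a cent. My planning poker tool uses a tiny fraction of any of these.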
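To put "a tiny fraction" into numbers, here is a back-of-the-envelope estimate. The usage figures in the example (20 sessions a month, 10 participants, 200 actions per session, 15 ms average Lambda duration) are invented for illustration and are not measurements from the real tool; only the free tier limits come from the paragraph above.

```javascript
// Free tier limits quoted above.
const FREE_TIER = {
  wsMessages: 1_000_000,
  lambdaRequests: 1_000_000,
  lambdaGbSeconds: 400_000,
};

// Hypothetical estimator: every action is one inbound message, one Lambda
// invocation, and one outbound message per participant (the fan-out).
function estimateMonthlyUsage({ sessions, participants, actionsPerSession, avgLambdaMs = 15, memoryMb = 128 }) {
  const lambdaRequests = sessions * actionsPerSession;
  const wsMessages = lambdaRequests * (1 + participants);
  const lambdaGbSeconds = lambdaRequests * (avgLambdaMs / 1000) * (memoryMb / 1024);
  return {
    lambdaRequests,
    wsMessages,
    lambdaGbSeconds,
    // Share of each free tier allowance consumed (1.0 = 100%).
    wsShare: wsMessages / FREE_TIER.wsMessages,
    lambdaRequestShare: lambdaRequests / FREE_TIER.lambdaRequests,
    lambdaComputeShare: lambdaGbSeconds / FREE_TIER.lambdaGbSeconds,
  };
}

// Example with made-up numbers:
// estimateMonthlyUsage({ sessions: 20, participants: 10, actionsPerSession: 200 })
// gives 4,000 Lambda requests and 44,000 WebSocket messages.
```

Under those assumptions the busiest dimension, WebSocket messages, stays below 5% of the monthly allowance.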

When Serverless WebSocket Makes Sense

Serverless WebSocket fits well when you have low concurrent connections (tens to hundreds, not thousands), bursty traffic (active during meetings, idle otherwise), simple fan-out to a small group rather than pub/sub at scale, and cost sensitivity where paying for always-on compute is wasteful. You also get operational simplicity: no servers to patch, no containers to update.

It falls apart when you need persistent connections with guaranteed ordering (API Gateway WebSocket has a 10-minute idle timeout and 2-hour maximum connection duration), high fan-out to thousands of connections per message, sub-millisecond latency (DynamoDB + Lambda adds 5-50ms per operation), or complex real-time protocols like binary WebSocket frames, multiplexing, or custom heartbeats.

For my planning poker tool, the fit was perfect. The same approach would work for any small-group collaboration tool: retros, stand-up dashboards, team quizzes, live polls.

Lessons From the Migration

API Gateway WebSocket API is underrated. For small-scale real-time apps, it eliminates all the infrastructure complexity of WebSocket servers.

DynamoDB TTL handles cleanup automatically. Set a TTL on every item and forget about orphan data. Rooms, connections, votes - everything self-destructs after 24 hours.

A GSI-based broadcast pattern is simple and effective: query all connections in a room, fan-out PostToConnection. It won’t scale to 10,000 users per room, but it handles 50 easily.

Single-file frontends on S3 + CloudFront cost nothing. No server, no build step, no framework - just HTML, CSS, and JavaScript served from the edge.

After this migration, I stopped thinking about the tool entirely. No pods to schedule, no certificates to rotate, no network policies to debug. It just runs.

Try it at poker.genicot.eu. Create a room, share the link, and estimate. Zero friction, zero login, zero cost.

More from the blog

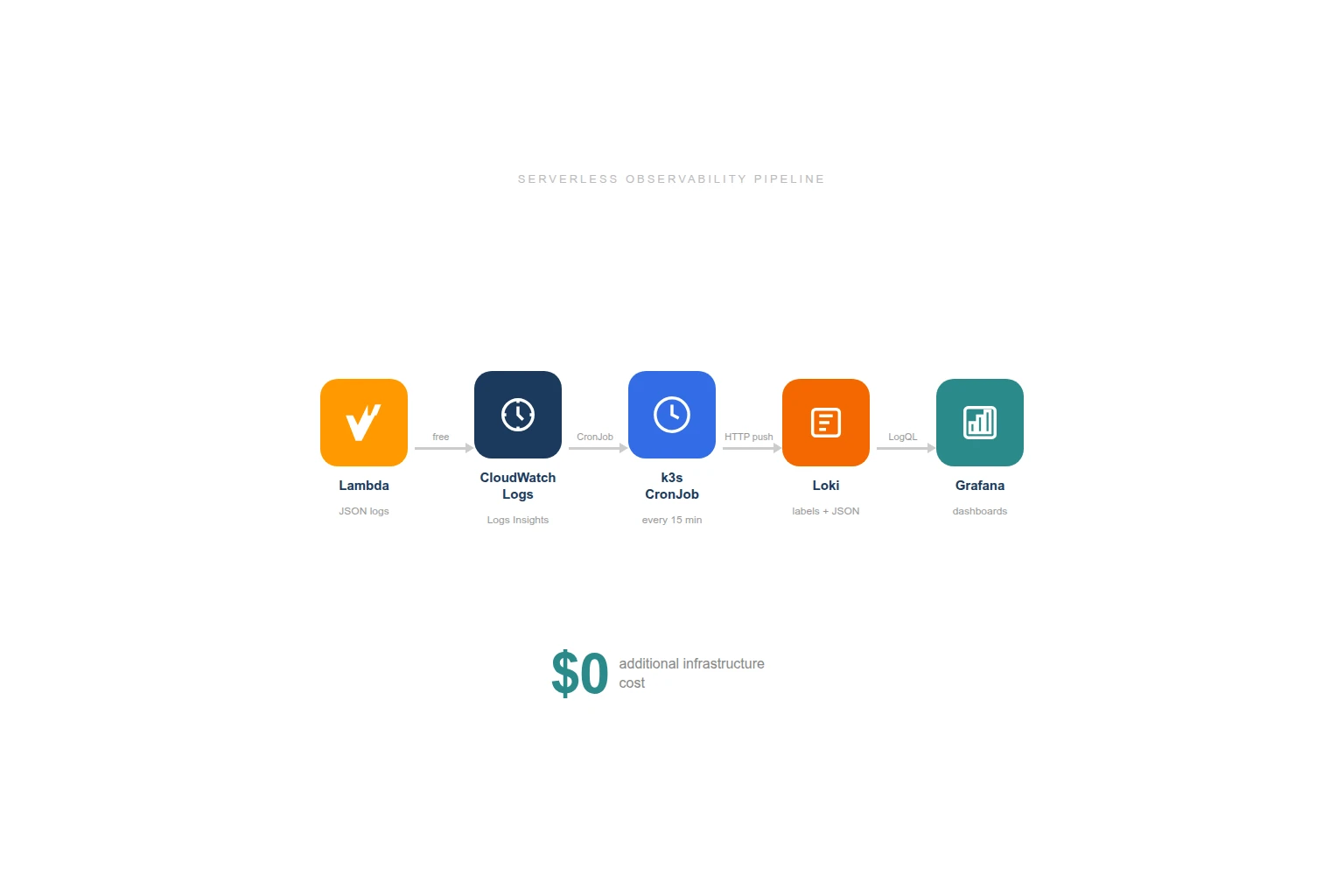

Serverless Observability for $0: CloudWatch Logs to Grafana via k3s CronJobs

Azure Static Web Apps: The Jamstack Platform Azure Was Missing